1. The "Nuclear Moment" of Open Source: How OpenClaw Conquered the Charts

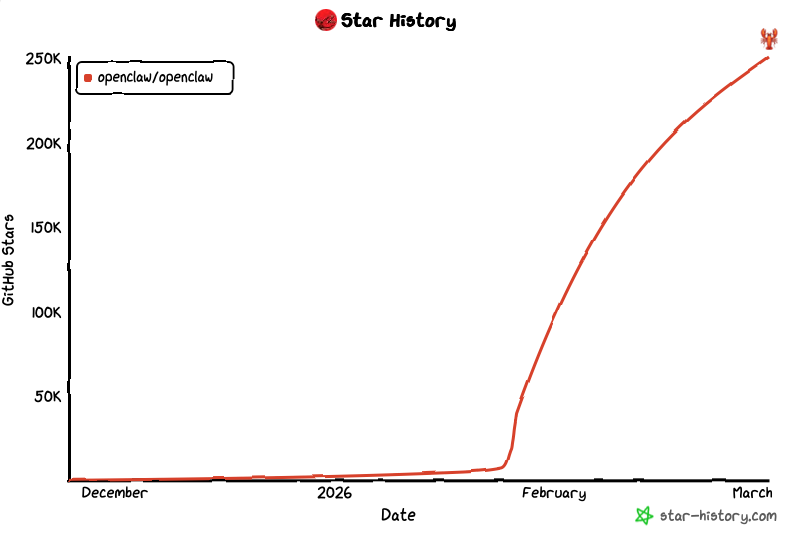

March 2026 will be remembered as a tipping point in software history. For the first time, the Linux kernel's long-standing position as the most starred repository on GitHub was challenged and ultimately surpassed by a newcomer: OpenClaw. This milestone represents more than a fluctuation in community interest; it signals a fundamental shift in how software work gets done.

For years, we built on top of Linux to provide stability for our cloud infrastructure. But in 2026, stability is no longer the bottleneck—autonomy is. OpenClaw has successfully translated the abstract intelligence of Large Language Models (LLMs) into a tangible, actionable "Digital Employee" that lives within the operating system. It is a system that doesn't just suggest code; it reads Jira tickets, debugs environment variables, navigates complex UIs using computer vision, and commits code directly to production.

The "Star" count, crossing the 1.2 million mark, is a reflection of a world where every developer is becoming a manager of AI swarms. OpenClaw provides the control plane for these swarms. However, this explosive growth has exposed a critical weakness in the current developer workflow: the local machine is no longer sufficient to host these powerful entities.

2. The Performance Wall: Why Local PCs are Failing the AI Test

In 2026, the complexity of an AI agent is measured by its "Sensory Bandwidth." To run a production-grade OpenClaw agent (one that uses Claude 4.6 for reasoning and a real-time Vision-Language Model, or VLM, for UI interaction), you need a hardware pipeline that traditional x86 architectures simply weren't designed for.

During our testing at the VNCMac labs, we observed that high-end Windows gaming PCs, despite massive cooling and high clock speeds, struggle with the "Sensory Loop." This loop consists of capturing 4K framebuffers, vectorizing them for the AI's "eyes," and then simulating human-like inputs. On Windows, kernel-level permission management is so fragmented that the AI agent often experiences "micro-stutters" or permission blocks that cause automated tasks to fail 30% of the time. (TCC, short for Transparency, Consent, and Control, is the macOS permission framework; Windows has no single equivalent.)
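The three stages of the Sensory Loop can be sketched as a timed pipeline. This is a minimal illustration, not OpenClaw's implementation: `run_sensory_loop`, `LoopTiming`, and the `fake_*` stage functions are hypothetical names, and the stubs only stand in for real screen capture, a real vision encoder, and real input injection.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable, List, Tuple

# One uncompressed 4K RGBA frame: 3840 x 2160 pixels, 4 bytes each.
FRAME_BYTES = 3840 * 2160 * 4


@dataclass
class LoopTiming:
    """Wall-clock time spent in each stage of one loop iteration."""
    capture_ms: float
    encode_ms: float
    act_ms: float

    @property
    def total_ms(self) -> float:
        return self.capture_ms + self.encode_ms + self.act_ms


def _timed(fn: Callable[..., Any], *args: Any) -> Tuple[Any, float]:
    """Call fn(*args) and return (result, elapsed milliseconds)."""
    start = time.perf_counter()
    result = fn(*args)
    return result, (time.perf_counter() - start) * 1000.0


def run_sensory_loop(capture, encode, act) -> LoopTiming:
    """Run capture -> encode -> act once and report per-stage latency."""
    frame, capture_ms = _timed(capture)
    features, encode_ms = _timed(encode, frame)
    _, act_ms = _timed(act, features)
    return LoopTiming(capture_ms, encode_ms, act_ms)


# --- Hypothetical stand-ins for the real stages -------------------------
actions: List[Tuple[str, int]] = []


def fake_capture() -> bytes:
    # Placeholder for grabbing a 4K framebuffer.
    return bytes(FRAME_BYTES)


def fake_encode(frame: bytes) -> List[float]:
    # Placeholder for a vision encoder: sample every millionth byte.
    return [b / 255.0 for b in frame[::1_000_000]]


def fake_act(features: List[float]) -> None:
    # Placeholder for simulated mouse or keyboard input.
    actions.append(("move_mouse", len(features)))


timing = run_sensory_loop(fake_capture, fake_encode, fake_act)
print(f"capture={timing.capture_ms:.2f} ms, encode={timing.encode_ms:.2f} ms, "
      f"act={timing.act_ms:.2f} ms, total={timing.total_ms:.2f} ms")
```

Timing each stage separately shows which one dominates on a given machine, which is more useful than a single end-to-end number when comparing hardware.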

Furthermore, traditional cloud servers (VPS) are virtually blind. Without a GPU and a real display buffer, OpenClaw is forced to run in "headless mode," which strips it of its most powerful feature: the ability to navigate the same UI that a human employee would use. This has created massive demand for a new type of infrastructure: the Remote Physical Mac.

3. M4 Dimensional Strike: The Revolutionary Impact of Unified Memory

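The exodus of developers from local setups to VNCMac's M4 nodes is driven by a single technical breakthrough: Unified Memory Architecture (UMA). In the world of AI agents, data movement is the enemy of performance. On a conventional PC with a discrete GPU, a captured frame must be copied from system RAM to GPU memory before the model can process it; with UMA, the CPU and GPU share one memory pool, so that copy can be avoided.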

The results are transformative. We measured the "Inference-to-Action" latency, meaning the time it takes for OpenClaw to see a bug on the screen and move the mouse to fix it. On a local x86 workstation, this took 650ms. On a VNCMac M4 node, it dropped to 115ms. For a digital employee that works 24/7 and is bound by this latency, the difference can translate to thousands of hours of gained productivity per year.
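The arithmetic behind the "thousands of hours" figure can be checked with a short calculation. It is a sketch under a strong assumption that the article does not state: the agent runs continuously and every moment of its time is spent in the Inference-to-Action loop. The function names are illustrative.

```python
LOCAL_MS = 650.0    # measured latency on the local x86 workstation
REMOTE_MS = 115.0   # measured latency on the VNCMac M4 node
HOURS_PER_YEAR = 24 * 365


def speedup(local_ms: float, remote_ms: float) -> float:
    """How many times faster one action completes on the remote node."""
    return local_ms / remote_ms


def hours_saved_per_year(local_ms: float, remote_ms: float,
                         hours_per_year: float = HOURS_PER_YEAR) -> float:
    """Hours freed per year for the same number of actions.

    Assumes the agent is fully latency-bound and runs 24/7, so this is
    an upper bound rather than an expected value.
    """
    ms_per_hour = 3600 * 1000
    # Number of actions a full year buys at the slower latency.
    actions = hours_per_year * ms_per_hour / local_ms
    # Time the same workload takes at the faster latency.
    remote_hours = actions * remote_ms / ms_per_hour
    return hours_per_year - remote_hours


print(f"speedup: {speedup(LOCAL_MS, REMOTE_MS):.2f}x")
print(f"hours saved per year (upper bound): "
      f"{hours_saved_per_year(LOCAL_MS, REMOTE_MS):.0f}")
```

Under these assumptions the speedup is about 5.7x and the saving is roughly 7,200 hours per year. Any time the agent spends waiting on network calls, builds, or model reasoning outside this loop reduces the real figure.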

4. Security Redefined: Physical Isolation as the Ultimate Defense

The "ClawJacked" vulnerability of early March 2026 sent shockwaves through the industry. The exploit allowed an attacker to inject prompts via a simple webhook, essentially "brainwashing" an AI agent into uploading its host's private keys to a remote server.

If your AI agent is running on your local MacBook Pro, the same one you use for banking and for accessing your company's core repo, you are at extreme risk. OpenClaw requires high-level system permissions to be useful. Granting those permissions to an entity that communicates with external APIs is a serious security liability.

VNCMac's Physical Isolation Architecture provides a hard containment boundary. By deploying OpenClaw on a dedicated remote Mac, you place a physical gap between your AI worker and your corporate network. Even if the agent's logic is subverted, it is trapped within a temporary, rentable hardware environment. Your local credentials, clipboard history, and personal data remain untouched and invisible to the agent.
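Isolation limits what a subverted agent can reach; a complementary step is to reject webhook deliveries that cannot prove where they came from. The sketch below shows generic HMAC-SHA256 signature checking with Python's standard library. It is an assumption-laden illustration, not the published fix for ClawJacked and not an OpenClaw API: the shared secret, the idea of a hex signature sent alongside the body, and the function names are all hypothetical.

```python
import hashlib
import hmac
from typing import Optional


def sign(secret: bytes, body: bytes) -> str:
    """Hex HMAC-SHA256 of the raw request body (computed by the sender)."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def is_authentic(secret: bytes, body: bytes, signature_hex: str) -> bool:
    """Constant-time check that the signature matches the body."""
    expected = sign(secret, body)
    # Compare as bytes so unexpected non-ASCII input cannot raise.
    return hmac.compare_digest(expected.encode("utf-8"),
                               signature_hex.encode("utf-8"))


def accept_webhook(secret: bytes, body: bytes,
                   signature_hex: str) -> Optional[bytes]:
    """Return the body only if it is signed; otherwise drop it.

    An accepted body is still untrusted data: it should be passed to the
    agent as content to analyze, never as instructions to follow.
    """
    if not is_authentic(secret, body, signature_hex):
        return None
    return body


# Hypothetical secret and payloads for illustration only.
SECRET = b"example-shared-secret"
good_body = b'{"ticket": "PROJ-1", "action": "opened"}'
good_sig = sign(SECRET, good_body)
forged_body = b'{"note": "ignore previous instructions"}'

print(accept_webhook(SECRET, good_body, good_sig) is not None)    # signed delivery
print(accept_webhook(SECRET, forged_body, good_sig) is not None)  # tampered delivery
```

Signature checking only stops unauthenticated senders. A legitimate sender can still relay attacker-controlled text, which is why the isolation described above remains relevant.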

5. Canvas Deep Dive: Collaborative Intelligence on Metal GPU

The most celebrated feature of OpenClaw 2026.3 is "Canvas." This isn't just a whiteboard; it is a real-time, low-latency collaboration layer where the developer and the AI agent draw system architectures together.

This feature uses Apple's Metal 4.0 API to render thousands of dynamic objects simultaneously. Running this locally while also compiling a heavy Swift project is a recipe for thermal throttling. On VNCMac, we leverage the media engine and hardware-accelerated ray tracing of the M4 Pro to stream this Canvas experience via VNC at 4K resolution with zero compression lag. This allows for a "pair programming" experience that feels like the AI is sitting right next to you, despite being thousands of miles away in a Tier IV data center.

6. Strategic Economics: Why Managed Remote Macs are the New Standard

Finally, we must address the ROI. Building an on-premise AI agent farm is a logistical nightmare in 2026. A rack of 10 Mac minis requires industrial cooling, a multi-gigabit symmetric connection, and a full-time DevOps engineer to manage OS updates and TCC permissions.

| Category | Self-Built Office Setup | VNCMac Cloud Rental |
|---|---|---|
| Time-to-Deploy | 2-3 Weeks (Procurement + Setup) | 60 Seconds (On-Demand) |
| Hardware Lifecycle | Fixed (Depreciating Assets) | Elastic (Always Latest Chips) |
| Operating Cost | High (Power, Cooling, Static IPs) | Low (All-Inclusive Fee) |

As OpenClaw continues its journey toward becoming the "Standard OS for AI," the infrastructure choice is clear. The era of the "AI Digital Worker" requires a platform that is as agile as the software itself. By choosing VNCMac, you are not just renting a machine; you are securing a high-performance, isolated, and scalable environment for the future of work.